For a while now I’ve been playing quite intensively with Deep Learning, both for work-related research and for personal projects. More specifically, I’ve been using the Keras framework on top of a TensorFlow backend for all sorts of stuff. From big and complex malware detection projects to smaller and simpler experiments with ideas I just wanted to quickly implement and test, no matter the scope of the project I always found myself struggling with the same issues: code reuse across tens of crappy Python and shell scripts, datasets and models spread all over my dev and prod servers, no real standard for versioning them, no order, no structure.

So a few days ago I started writing what was initially meant to be just a simple wrapper for the main commands of my training pipelines, but it quickly became a full-fledged framework and manager for all my Keras-based projects.

Today I’m pleased to open source and present project Ergo by showcasing an example use-case: we’ll prototype, train and test a Convolutional Neural Network on top of the PlanesNet raw dataset in order to build an airplane detector for satellite imagery.

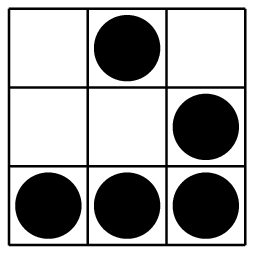

This image and the general idea were taken from this project; however, the model structure, training algorithm and data preprocessing are different. As I said, the point of this post is to showcase Ergo with something less clichéd than the handwritten digit recognition problem with the MNIST database.

Prerequisites

First things first: you’ll need python3 and pip3. Download Ergo’s latest stable release from GitHub, extract it somewhere on your disk and run:

cd /path/to/ergo

sudo pip3 install -r requirements.txt

python3 setup.py build

sudo python3 setup.py install

If you’re interested in visualizing the model and training metrics, you’ll also need to run:

sudo apt-get install graphviz python3-tk

This way you’ll have installed all the dependencies, including the default version of TensorFlow, which runs on the CPU. Since our training dataset will be relatively big and our model moderately complex, we might want to use GPUs instead. To do so, make sure you have CUDA 9.0 and cuDNN 7.0 installed and then run:

sudo pip3 uninstall tensorflow

sudo pip3 install tensorflow-gpu

If everything worked correctly, you’ll be able to check your GPU setup, the software versions and the available hardware with the nvidia-smi and ergo info commands. For example, this is the output on my home training server:

Airplanes and Satellites

Now it’s time to grab our dataset: download the planesnet.zip file from Kaggle and extract it somewhere on your disk. We will only need the folder of PNG files, each one named like 1__20160714_165520_0c59__-118.4316008_33.937964191.png, where the leading 1__ or 0__ is the label (0 = no plane, 1 = there’s a plane).
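Reading the label back from a file name takes a single split on the double underscore. The sketch below assumes the remaining fields are a scene ID followed by longitude and latitude, as the example name suggests; parse_planesnet_name is a hypothetical helper, not part of Ergo or of the dataset tooling.

```python
import os


def parse_planesnet_name(path):
    """Split a PlanesNet file name into (label, scene_id, longitude, latitude).

    Assumption: names follow '<label>__<scene id>__<longitude>_<latitude>.png'.
    """
    stem, ext = os.path.splitext(os.path.basename(path))
    if ext.lower() != '.png':
        raise ValueError('not a PNG file: %s' % path)
    label, scene_id, coords = stem.split('__')
    lon, lat = coords.split('_')
    if label not in ('0', '1'):
        raise ValueError('unexpected label prefix: %s' % label)
    # 0 = no plane, 1 = there's a plane
    return int(label), scene_id, float(lon), float(lat)
```

Only the label matters for training; the coordinates are just carried along in the name.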

We’ll feed the raw images to our system, preprocess them into labeled vectors and then train a CNN on top of those.

Data Preprocessing

Normally we would start a new Ergo project by issuing the ergo create planes-detector command, which creates a new folder named planes-detector with three files in it:

- prepare.py, which we will customize to preprocess the dataset;
- model.py, where we will customize the model;
- train.py, for the training algorithm.

These files would be filled with some default code and only a minimum amount of changes would be needed in order to implement our experiment, changes that I already made available on the planes-detector repo on GitHub.

By default, Ergo expects the dataset to be a CSV file where each row has the form y,x0,x1,x2,... (y being the label and xn the scalars of the input vector). Our inputs, however, are images, which have a width, a height and an RGB depth. To transform these 3-dimensional tensors into flat vectors that Ergo understands, we need to customize the prepare.py script to do some data preprocessing.

This will loop over all the pictures, flatten each one into a vector of 1200 elements (20x20x3), prepend the y scalar (the label) and eventually return a pandas.DataFrame that Ergo can digest.
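The steps just described can be sketched roughly as follows. This is not the prepare.py from the planes-detector repo. It assumes Pillow, NumPy and pandas are installed, and image_to_row and load_planesnet are hypothetical names, since Ergo’s own prepare.py entry point is whatever the generated template defines. Scaling pixels to [0, 1] is also an assumption of this sketch.

```python
import os

import numpy as np
import pandas as pd

IMG_SIZE = 20  # PlanesNet chips are 20x20 RGB


def image_to_row(pixels, label):
    """Flatten a (20, 20, 3) image into [y, x0, ..., x1199]."""
    pixels = np.asarray(pixels, dtype=np.float32)
    if pixels.shape != (IMG_SIZE, IMG_SIZE, 3):
        raise ValueError('expected a 20x20x3 image, got %s' % (pixels.shape,))
    # Assumption: scale 0-255 pixel values to [0, 1].
    # reshape(-1) flattens row-major with channels last.
    x = pixels.reshape(-1) / 255.0
    return np.concatenate(([float(label)], x))


def load_planesnet(folder):
    """Hypothetical prepare step: one DataFrame row per PNG in `folder`."""
    from PIL import Image  # Pillow, only needed when actually reading files

    rows = []
    for name in sorted(os.listdir(folder)):
        if not name.endswith('.png'):
            continue
        label = int(name.split('__', 1)[0])  # '1__...' -> 1, '0__...' -> 0
        with Image.open(os.path.join(folder, name)) as img:
            pixels = np.asarray(img.convert('RGB'))
        rows.append(image_to_row(pixels, label))
    return pd.DataFrame(rows)
```

Column 0 of the resulting DataFrame holds the label and columns 1 to 1200 the pixels, matching the y,x0,x1,... layout described above.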

The Model

This is not a post about how convolutional neural networks (or neural networks in general) work, so I won’t go into details about that; chances are that if you have the type of technical problems Ergo solves, you already know. In short, CNNs can encode visual/spatial patterns from input images and use them as features to predict things like how much an image looks like a cat … or a plane :) TL;DR: CNNs are great for images.

This is what our model.py looks like:

Other than a layer that reshapes the flat input back to the 3-dimensional shape our convolutional layers understand, there are two convolutional layers with 32 and 64 filters respectively, both with a 3x3 kernel, plus the usual suspects that help us get more accurate results after training (MaxPooling2D to downsample the feature maps while keeping the strongest activations, and a couple of Dropout layers to reduce overfitting) and the Dense hidden and output layers. A pretty standard model for simple image recognition problems.
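For reference, a model matching that description could be sketched with tf.keras as follows. This is not the repo’s model.py. It targets a recent TensorFlow 2.x rather than the CUDA 9.0 era setup above. The dropout rates, the 128-unit hidden layer, the two-class softmax output, the optimizer and the build_model name are all assumptions made for the sketch.

```python
from tensorflow.keras import Input
from tensorflow.keras.layers import Conv2D, Dense, Dropout, Flatten, MaxPooling2D, Reshape
from tensorflow.keras.models import Sequential


def build_model(n_classes=2):
    """Hypothetical CNN: flat 1200-value rows -> 20x20x3 images -> class probabilities."""
    model = Sequential([
        Input(shape=(20 * 20 * 3,)),
        # Undo the flattening done during preprocessing (row-major, channels last).
        Reshape((20, 20, 3)),
        Conv2D(32, (3, 3), activation='relu'),   # -> 18x18x32
        Conv2D(64, (3, 3), activation='relu'),   # -> 16x16x64
        MaxPooling2D(pool_size=(2, 2)),          # -> 8x8x64, keeps the strongest activations
        Dropout(0.25),
        Flatten(),                               # -> 4096
        Dense(128, activation='relu'),           # hidden layer (size is an assumption)
        Dropout(0.5),
        Dense(n_classes, activation='softmax'),  # one probability per class
    ])
    model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
    return model
```

With these sizes the model has 544,066 trainable parameters, almost all of them in the first Dense layer (4096 x 128 weights). That is one reason the pooling and dropout layers earn their place even in a small network like this.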

The Training

We can finally start talking about training. I left the train.py file almost unchanged, only adding a few lines to integrate it with TensorBoard.
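Those TensorBoard lines aren’t shown here. With Keras, this kind of integration usually amounts to passing a TensorBoard callback to fit(). The sketch below assumes the logs go into the project’s logs folder, matching the path given to tensorboard later in this post. tensorboard_callback is a hypothetical helper, not part of Ergo.

```python
import os

from tensorflow.keras.callbacks import TensorBoard


def tensorboard_callback(project_path):
    """Hypothetical helper: write training metrics under <project>/logs for TensorBoard."""
    log_dir = os.path.join(project_path, 'logs')
    # histogram_freq=0 logs scalar metrics (loss, accuracy) only, which keeps logs small.
    return TensorBoard(log_dir=log_dir, histogram_freq=0)
```

In a training script it would be used as model.fit(x_train, y_train, validation_data=(x_val, y_val), callbacks=[tensorboard_callback(project_path)]).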

The data preprocessing, import and training process can now be started with:

ergo train /path/to/planes-detector-project --dataset /path/to/planesnet-pictures

If running on multiple GPUs, you can use the optional --gpus N argument to determine how many to use, while the --test N and --validation N arguments can be used to partition the dataset (by default, the test and validation sets are each 15% of the whole dataset, while the rest is used for training).
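Ergo handles the partitioning itself. Purely to illustrate what the default 15% test / 15% validation split means, here is a hypothetical standard-library sketch. split_indices is not Ergo’s code, and the fixed seed is an assumption added so the split is reproducible.

```python
import random


def split_indices(n, test=0.15, validation=0.15, seed=42):
    """Shuffle n row indices and split them into (train, validation, test) lists."""
    if test + validation >= 1.0:
        raise ValueError('test + validation must leave room for training data')
    idx = list(range(n))
    random.Random(seed).shuffle(idx)  # shuffle so each split sees both classes
    n_test = int(n * test)
    n_val = int(n * validation)
    test_idx = idx[:n_test]
    val_idx = idx[n_test:n_test + n_val]
    train_idx = idx[n_test + n_val:]  # everything left over goes to training
    return train_idx, val_idx, test_idx
```

For 1000 rows, this gives 700 training, 150 validation and 150 test rows, with no row appearing in more than one split.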

Depending on your hardware configuration, this process can take anywhere from a few minutes to several hours (remember you can monitor it with tensorboard --logdir=/path/to/planes-detector-project/logs), but eventually you will see something like:

Other than manually inspecting the model YAML file and model.stats, you can now run:

ergo view /path/to/planes-detector-project

This shows the model structure and the accuracy and loss metrics during training and validation:

Not bad! Over 98% accuracy on a dataset of thousands of images!

We can now remove the temporary train, validation and test datasets from the project:

ergo clean /path/to/planes-detector-project

Using the Model

You can now load the trained weights (the model.h5 file) into your own project and use them as you like. For instance, you might slide a 20x20 pixel window over a bigger image and mark the areas that the network classifies as planes. Another option is to use Ergo itself and expose the model as a REST API:

ergo serve /path/to/planes-detector-project

You’ll then be able to query the model’s predictions with a simple:

curl "http://127.0.0.1:8080/?x=0.345,1.0,0.9,..."
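Both ways of using the model can be sketched in a few lines of Python. sliding_window_detect, build_predict_url and predict_remote are hypothetical helpers. predict_fn is assumed to map a batch of flattened 20x20x3 windows, preprocessed the same way as the training data, to one plane score per window. The only part taken from the curl call is the comma-separated x parameter. The server’s response format isn’t covered here, so predict_remote simply returns the raw body.

```python
import urllib.request

import numpy as np


def sliding_window_detect(image, predict_fn, window=20, stride=10, threshold=0.5):
    """Slide a window x window patch over an (H, W, 3) image and return
    (row, col, score) for every patch whose score is >= threshold."""
    image = np.asarray(image, dtype=np.float32)
    height, width = image.shape[:2]
    coords, batch = [], []
    for r in range(0, height - window + 1, stride):
        for c in range(0, width - window + 1, stride):
            # Flatten each patch like the training rows (row-major, channels last).
            batch.append(image[r:r + window, c:c + window, :].reshape(-1))
            coords.append((r, c))
    if not batch:
        return []  # image is smaller than one window
    scores = np.asarray(predict_fn(np.stack(batch))).reshape(-1)
    return [(r, c, float(s)) for (r, c), s in zip(coords, scores) if s >= threshold]


def build_predict_url(vector, base_url='http://127.0.0.1:8080/'):
    """Encode a flat input vector as the comma-separated x query parameter."""
    return base_url + '?x=' + ','.join(str(float(v)) for v in vector)


def predict_remote(vector, base_url='http://127.0.0.1:8080/', timeout=10):
    """Query a running `ergo serve` instance and return the raw response body as text."""
    with urllib.request.urlopen(build_predict_url(vector, base_url), timeout=timeout) as resp:
        return resp.read().decode('utf-8')
```

With a two-class softmax Keras model, predict_fn could be something like lambda batch: model.predict(batch)[:, 1], assuming column 1 is the "plane" class. A stride smaller than the window makes neighbouring windows overlap. This costs more predictions but makes it less likely to miss a plane sitting on a window boundary. Also note that a full 1200-value vector makes for a long URL.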

As usual, enjoy <3